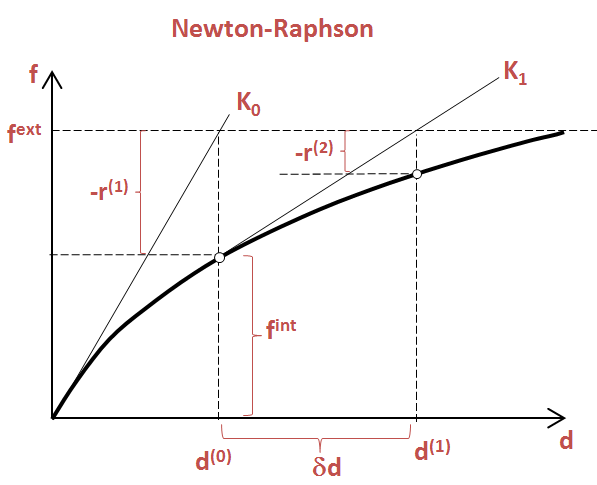

The last alternative looks interesting, especially in the context of Julia, which has great tools for automatic differentiation.

## Example with automatic differentiation

Automatic differentiation is a very interesting tool. While there are several packages within the Julia ecosystem that implement this functionality, ForwardDiff is one of the most straightforward and more than sufficient for our needs. For example, to produce a derivative function, we can rely on the `ForwardDiff.derivative` routine. While this routine has been thoroughly tested, it's considered good practice to run our own tests:

```julia
using ForwardDiff

# autodiff(f) returns a new function that evaluates f'(x) via forward-mode AD
autodiff(f) = x -> ForwardDiff.derivative(f, x)

# Test with our known function
```

Now that we can differentiate functions automatically, we can extend our `NewtonRaphson` function with the definition:

```julia
# Extra method without a derivative argument: build f' with autodiff and
# delegate to the existing NewtonRaphson(f, df, x0, tol, maxIter) method
NewtonRaphson(f, x0, tol = 1e-8, maxIter = 1e3) = NewtonRaphson(f, autodiff(f), x0, tol, maxIter)
```

Note that this time, `NewtonRaphson` doesn't explicitly depend on the derivative function. Now we can do the following:

```julia
x = NewtonRaphson(f, 1.0)
# 3.597285023540418
```

## Conclusion

The Newton-Raphson method is one of the classical zero-finding algorithms. It is straightforward to implement in Julia, and in combination with automatic differentiation it provides a very useful tool for solving simple non-linear equations.

I will continue to post tutorials like this one to discuss numerical algorithms and their implementation in the Julia programming language. If you don't want to miss an update, subscribe to the mailing list.

Recently, I ran into an interesting video on YouTube on numerical methods (at this point, I can't help but wonder if YouTube can read my mind, but I digress). It was from a channel called numericalmethodsguy, run by a professor of mechanical engineering at the University of Florida. The videos were recorded back in 2009 at just 240p. Even so, I found their content very intriguing and easily digestible, and they did not seem to assume much mathematical knowledge beyond basic high school calculus. After watching a few of them, I decided to implement some numerical methods algorithms in Python. Specifically, this post will deal mainly with two methods of solving non-linear equations: the Newton-Raphson method and the secant method.

## Equation Representation

Before we move on, we need a way of representing equations in Python. For the sake of simplicity, let's first consider only polynomials. The simplest way of representing a polynomial in Python is to use a function. For example, we can express $f(x) = x^3 - 20$ as a Python function.

While it's great that we can calculate derivatives and integrals, one very obvious drawback of this direct approach is that we cannot deal with non-polynomial functions, such as exponentials or logarithms. Moreover, the list index representation cannot represent expressions that include terms with negative or fractional powers, such as $x^{-1}$ or $\sqrt{x}$. For these reasons, we will also need other methods of calculating derivatives. This is the motivation for the approximation methods outlined in the section below.
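The following is a minimal Python sketch of the representations and methods this post mentions. It is not the author's code, and every helper name is hypothetical: `poly_eval`, `poly_derivative`, `central_difference`, `newton_raphson` and `secant`.

The sketch rests on three assumptions:
- The list index representation stores the coefficient of $x^i$ at index $i$.
- Derivatives are approximated with a central difference, which is one common approximation method.
- The stopping rule is a step smaller than `tol`.

```python
# f(x) = x^3 - 20, represented as a plain Python function.
def f(x):
    return x**3 - 20

# Hand-written exact derivative, f'(x) = 3x^2.
def df(x):
    return 3 * x**2

# Hypothetical list index representation: coeffs[i] is the coefficient of x^i,
# so [-20, 0, 0, 1] stands for x^3 - 20.
def poly_eval(coeffs, x):
    """Evaluate the polynomial with Horner's scheme."""
    result = 0.0
    for c in reversed(coeffs):
        result = result * x + c
    return result

def poly_derivative(coeffs):
    """d/dx of sum(c_i x^i) is sum(i * c_i x^(i-1)); drop the constant slot."""
    return [i * c for i, c in enumerate(coeffs)][1:]

def central_difference(func, h=1e-6):
    """Return a function approximating func' by (f(x+h) - f(x-h)) / (2h)."""
    return lambda x: (func(x + h) - func(x - h)) / (2 * h)

def newton_raphson(func, dfunc, x0, tol=1e-8, max_iter=1000):
    """Iterate x <- x - f(x)/f'(x) until the step size drops below tol."""
    x = x0
    for _ in range(max_iter):
        slope = dfunc(x)
        if slope == 0:
            raise ZeroDivisionError("derivative vanished; Newton step undefined")
        step = func(x) / slope
        x -= step
        if abs(step) < tol:
            return x
    raise RuntimeError("Newton-Raphson did not converge")

def secant(func, x0, x1, tol=1e-8, max_iter=1000):
    """Like Newton-Raphson, but f' is replaced by the slope through the last two iterates."""
    for _ in range(max_iter):
        f0, f1 = func(x0), func(x1)
        if f1 == f0:
            raise ZeroDivisionError("flat secant; cannot take a step")
        x2 = x1 - f1 * (x1 - x0) / (f1 - f0)
        if abs(x2 - x1) < tol:
            return x2
        x0, x1 = x1, x2
    raise RuntimeError("secant method did not converge")
```

The following three calls should all converge to the real cube root of 20, about 2.7144:
- `newton_raphson(f, df, 1.0)`
- `newton_raphson(f, central_difference(f), 1.0)`
- `secant(f, 1.0, 3.0)`

Passing `central_difference(f)` plays a role similar to the `autodiff`-based `NewtonRaphson` method in the Julia section: the caller no longer writes the derivative by hand. The difference is that the derivative is only approximated rather than computed exactly.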
